Difference between revisions of "Sculpted Prims: Technical Explanation"

Kidd Krasner (talk | contribs) m (→Pixel Format: Spelling, punctuation and one idiom fix.) |

(→Rendering in Second Life viewer: Updating the lossless issue to VWR-2404 (from VWR-866)) |


Revision as of 11:58, 29 September 2007

Introduction

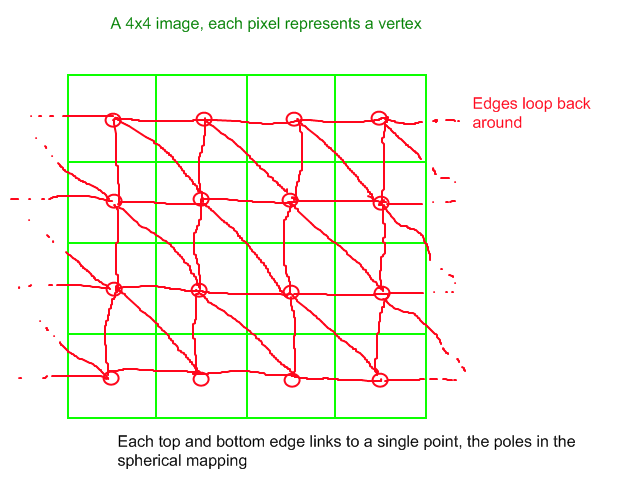

Sculpted prims are three-dimensional meshes created from textures. Each texture is a mapping of vertex positions: at full resolution each pixel would be one vertex, though fewer vertices may be used because of sampling (read below how the Second Life viewer treats your data). Each row of pixels (vertices) links back to itself, and for every block of four pixels two triangles are formed. At the top and bottom the vertices link to their respective pole.
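The linking described above can be sketched in a few lines of Python. This is only an illustration, not the viewer's code: the function name `grid_triangles`, the vertex numbering (row * cols + col) and the choice of diagonal within each block of four pixels are all assumptions, and the fans of triangles connecting to the poles are left out.

```python
def grid_triangles(rows, cols):
    """Return triangles (vertex-index triples) for a rows x cols pixel grid.

    Hypothetical helper: vertex index is row * cols + col, and each row
    wraps so that the last column links back to the first.
    """
    triangles = []
    for r in range(rows - 1):
        for c in range(cols):
            c_next = (c + 1) % cols          # wrap around at the end of the row
            a = r * cols + c                 # this row, this column
            b = r * cols + c_next            # this row, next column
            d = (r + 1) * cols + c           # next row, this column
            e = (r + 1) * cols + c_next      # next row, next column
            # Two triangles per block of four pixels; the diagonal (a-e)
            # is an arbitrary choice made for this sketch.
            triangles.append((a, b, e))
            triangles.append((a, e, d))
    return triangles


if __name__ == "__main__":
    tris = grid_triangles(3, 4)
    print(len(tris), "triangles; first:", tris[0])
```

Because each row wraps, a grid with `cols` pixels per row yields `cols` blocks per pair of rows, not `cols - 1`.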

Pixel Format

The alpha channel (if any) in position maps is currently unused, so we have 24 bits per pixel, giving us 8 bits per color channel, or values from 0-255. Each color channel represents an axis in 3D space, where red, green, and blue map to X, Y, and Z respectively. If you're mapping from other software, keep in mind that not all 3D software names its axes the way SL does. If your object has a different default orientation in SL than in your designer, compare the axes and swap where appropriate.

The color values map to an offset from the origin of the object <0,0,0>, with values less than 127 being negative offsets and values greater than 127 being positive offsets. An image that was entirely <127,127,127> pixels (flat gray) would represent a single dot in space at the origin.

A sculpted prim intrinsically has a size of one meter, so the color values from 0-255 map to offsets from -0.5 to 0.5 meters. Combined with the scale vector that all prims in Second Life possess, sculpted prims have the same maximum dimensions as regular procedural prims (10 meter diameter).
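As a rough sketch of this mapping, the Python below converts one pixel to an offset in meters. It is not taken from the viewer source. The function name `pixel_to_offset` is hypothetical, and the formula is an assumption: it centers on 127 so that flat gray lands exactly on the origin, which means 0 and 255 map to roughly -0.498 and +0.502 rather than exactly -0.5 and 0.5.

```python
def pixel_to_offset(rgb, scale=(1.0, 1.0, 1.0)):
    """Map an (R, G, B) pixel to an (X, Y, Z) offset in meters.

    Assumption for this sketch: 127 is treated as the origin and the
    0-255 range spans about one meter before the prim's scale vector
    is applied.
    """
    return tuple((c - 127) / 255.0 * s for c, s in zip(rgb, scale))


if __name__ == "__main__":
    print(pixel_to_offset((127, 127, 127)))               # flat gray: the origin
    print(pixel_to_offset((255, 127, 0)))                 # +X extreme, -Z extreme
    print(pixel_to_offset((255, 127, 127), (10, 10, 10))) # scaled to a 10 m prim
```

With a scale of 10 on each axis the extremes sit about 5 meters from the origin, matching the 10 meter maximum mentioned above.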

Texture Mapping

The position map of a sculpted prim also doubles as the UV map, describing how a texture will wrap around the mesh. The image already defines which vertices correlate to which pixels, and this is used to generate UV coordinates for vertices as they are created. This presents a big advantage for texture mapping over procedural prims created in Second Life because you can do all of your texturing in a 3D modeling program such as Maya, and when you export the position map you know the UV coordinates will be exactly preserved in Second Life.

Rendering in Second Life viewer

This analysis is based on 1.16.0(5) source and some tests.

Warning: Textures are stored using lossy JPEG2000 compression. If the texture is sized 64x64 or below, the compression artifacts are numerous and very bad; at 128x128 they are still there but greatly diminished. Therefore, for now, 128x128 will give you much better results even though this is a serious waste of memory. Note that going beyond 128x128 will give you little benefit, so please don't! Update: Lossless JPEG2000 compression is implemented, but does not upload all images correctly. This issue is in JIRA as VWR-2404; please vote for it to support fixing this feature.

Important: When we refer to the texture in terms of how the viewer code sees it, the first row (0) is the bottom row of your texture. Columns run left to right.

The Second Life viewer will, at its highest level of detail, render a grid of vertices in the following form:

- 1 top row of 33 vertices all mapped to a pole

- 31 rows of 33 vertices taken from your texture

- 1 bottom row of 33 vertices all mapped to a pole

For each row the 33rd vertex is the same as the 1st, which stitches the sculpture together at the sides. The poles are determined by taking the pixel at column width/2 on the first and last rows of your texture. (In a 64x64 texture that is the 33rd pixel, column 32, as we start counting columns from 0.)

For example, a sculpture at the highest level of detail Second Life supports requires a mesh of 33 x 33 vertices. If we have a 64x64 texture, this grid of vertices is sampled from the texture at the points:

    (32,0)  (32,0)  (32,0)  ... (32,0)  (32,0)  (32,0)
    (0,2)   (2,2)   (4,2)   ... (60,2)  (62,2)  (0,2)
      .       .       .           .       .       .
    (0,60)  (2,60)  (4,60)  ... (60,60) (62,60) (0,60)
    (0,62)  (2,62)  (4,62)  ... (60,62) (62,62) (0,62)
    (32,63) (32,63) (32,63) ... (32,63) (32,63) (32,63)
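The sample points listed above can be reproduced with a short Python sketch. It is written to match the 64x64 example only and is not the viewer's actual sampling code. The function name `sample_points` and its parameters are hypothetical, and how other texture sizes behave is not claimed here.

```python
def sample_points(width=64, height=64, n=33):
    """Return n rows of n (column, row) texture coordinates.

    Sketch under assumptions: the first and last rows are poles taken at
    column width/2, and the rows in between step evenly through the
    texture, with the final vertex of each row wrapping back to column 0.
    """
    step_x = width // (n - 1)
    step_y = height // (n - 1)
    rows = []
    for r in range(n):
        if r == 0:
            rows.append([(width // 2, 0)] * n)            # bottom pole
        elif r == n - 1:
            rows.append([(width // 2, height - 1)] * n)   # top pole
        else:
            rows.append([((c * step_x) % width, r * step_y) for c in range(n)])
    return rows


if __name__ == "__main__":
    grid = sample_points()
    for r in (0, 1, 30, 31, 32):
        print(grid[r][:3], "...", grid[r][-3:])
```

Note that the 33rd entry of each middle row equals the first, which is the stitching at the sides described earlier.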

It is recommended to use 64x64 images. In theory 32x32 should give you the same quality, but the current code cannot use the last (top!) row of your texture both as a row of vertices and as the row the pole is taken from. It incorrectly triggers generation of the pole one row early, so your last two rows of vertices are both filled with the pole.

Note that the next levels of detail are 17x17 vertices and 9x9 vertices, which are taken in the same way as above, but the texture will be interpolated first. The textures are scaled down (using interpolation) by the same factor as the mesh. Hence a 32x32 texture at lowest detail ends up being 8x8, while a 512x512 texture would end up being 128x128. You can only predefine how your sculpture will look at each LOD by having blocks of similarly colored pixels. Make sure the most important pixels are placed at positions that are used at every LOD.

The quality of your final result is also determined by the JPEG2000 compression. Read the warning at the start of this section and please vote for VWR-2404 on JIRA.
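The scaling arithmetic can be checked with a small Python sketch. The function name `lod_texture_size` is hypothetical, and the rule it encodes (divide the texture size by the same factor by which the number of mesh segments shrinks) is an interpretation of the paragraph above, not something read from the viewer source.

```python
def lod_texture_size(size, lod_vertices, full_vertices=33):
    """Return the texture edge length after scaling down for a given LOD.

    Assumption: a mesh of v x v vertices has v - 1 segments per side, and
    the texture shrinks by the ratio of full-detail to LOD segments.
    """
    factor = (full_vertices - 1) // (lod_vertices - 1)
    return size // factor


if __name__ == "__main__":
    for size in (32, 64, 512):
        for verts in (33, 17, 9):
            print(size, "texture at", verts, "x", verts, "vertices ->",
                  lod_texture_size(size, verts))
```

For the lowest level of detail (9x9 vertices) the factor is 4, which gives the 32 to 8 and 512 to 128 figures quoted above.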

Examples

A pack of sculpture maps available for download: sculpt-tests.zip

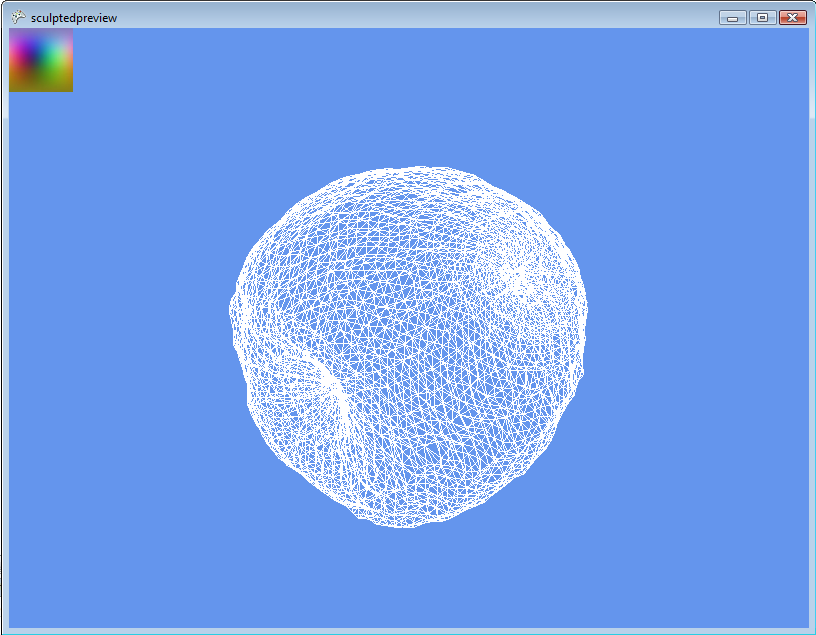

An apple sculpt shown in the sculptedpreview program

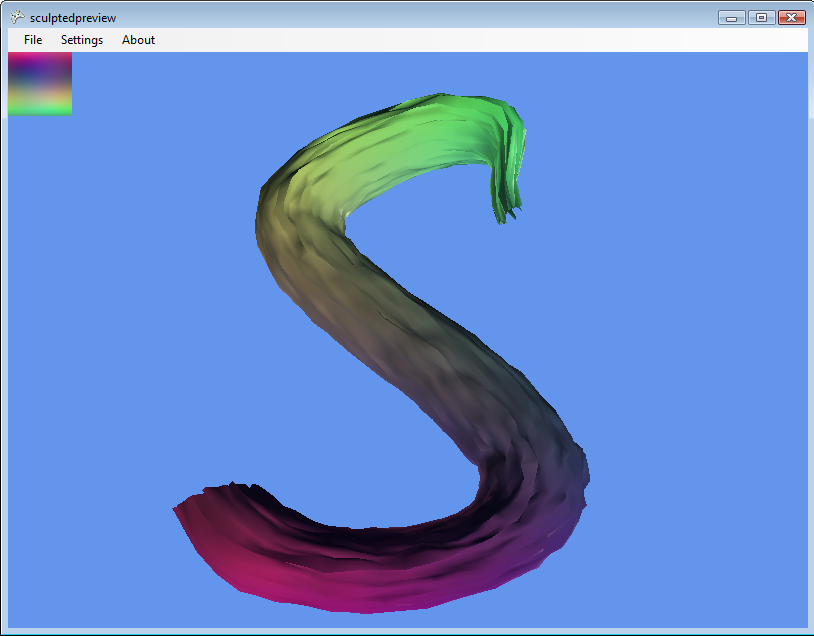

An S-shaped sculpture shown in the latest version of sculptedpreview, with the sculpture map also applied as the texture map